Security Engineering Is a Context Problem

Security work isn't building — it's a permanent triage queue. The bottleneck isn't tooling or knowledge. It's context. Part 1 of a series.

A founder I was advising recently sent me a screenshot. 4,200 open findings in their scanner. “Where do I even start?”

I asked the grounding questions. Which ones are exploitable? Which assets are internet-facing? Which services hold customer data? Which findings are duplicates of the same root cause? Who owns each repo?

He didn’t know. The scanner didn’t know either. That’s not the scanner’s job.

The scanner’s job was to find things. It found 4,200. The job nobody had done was figuring out what any of them meant.

That, in one screenshot, is what I want to talk about.

The thesis

Security engineering, the way it’s actually practiced inside most companies, is a permanent triage queue.

A finding shows up. Something is wrong, or might be wrong. You decide if it’s real, if it matters, who owns it, what breaks if you fix it, what breaks if you don’t. Repeat ten thousand times.

If that sounds like software development with the polarity flipped — devs build to make features exist, security folks investigate to make problems go away — that’s because it is. And that flipped polarity has a consequence almost nobody talks about explicitly:

The bottleneck in security is not knowledge. It’s not tooling. It’s context.

A senior engineer triaging a finding doesn’t usually fail because they don’t know what SQL injection is. They fail — or just go slowly — because they don’t know whether this SQLi reaches the database, whether this input is attacker-controlled, whether this service holds anything worth exfiltrating, whether this fix breaks the API for the team that owns the repo.

Knowing the exploit class is the easy 20%. Reconstructing the context around it is the other 80%. SQL injection in user.go:142 is trivial to flag. Working out whether that function is reachable from an internet-facing route, whether the input is attacker-controlled, and whether the affected table holds anything worth exfiltrating is the part that eats hours.

“But security teams build things”

Yes, and look at what they build.

Scanners. SIEMs. SOAR playbooks. ASPM. EDR. Detection rules. Triage dashboards. Asset inventories. Risk scoring. Internal CLI tools to enrich tickets.

Every one of those is infrastructure for the find-and-fix loop. Security teams ship code, but the code we ship is in service of triage — finding things faster, sorting them better, routing them to the right person, killing duplicates, prioritizing the ones that actually matter.

We are not building product. We are building tools to feed and clear the queue.

That’s not a complaint. It’s just an honest description of what the discipline actually does. And it doesn’t break the thesis — it deepens it. Even our build work is downstream of context. A scanner with no context produces a backlog of 4,200 tickets. A scanner with context produces 12 tickets that actually need a human.
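Here is what “killing duplicates” looks like mechanically. This is a minimal sketch that assumes each finding already carries a `root_cause` key, a hypothetical field naming the shared sink, dependency, or misconfiguration the finding traces back to. Grouping on that key is trivial. Supplying it is the context work.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    rule_id: str     # scanner rule ID, e.g. "sqli"
    location: str    # where the scanner flagged it, e.g. "user.go:142"
    root_cause: str  # hypothetical: the shared sink/dependency/misconfig behind it


def group_by_root_cause(findings):
    """Collapse findings that share a rule and a root cause into one group."""
    groups = defaultdict(list)
    for f in findings:
        groups[(f.rule_id, f.root_cause)].append(f)
    return dict(groups)


# Hypothetical findings: two SQLi hits flow through the same query helper.
findings = [
    Finding("sqli", "user.go:142", "db.buildQuery"),
    Finding("sqli", "order.go:88", "db.buildQuery"),
    Finding("s3-public", "assets-bucket", "aws:s3:assets-bucket"),
]
groups = group_by_root_cause(findings)
print(len(groups))  # 2 groups from 3 findings
```

The two SQL injection findings collapse into one ticket because a single fix to the hypothetical `db.buildQuery` helper clears both.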

The dev analogy that finally makes it click

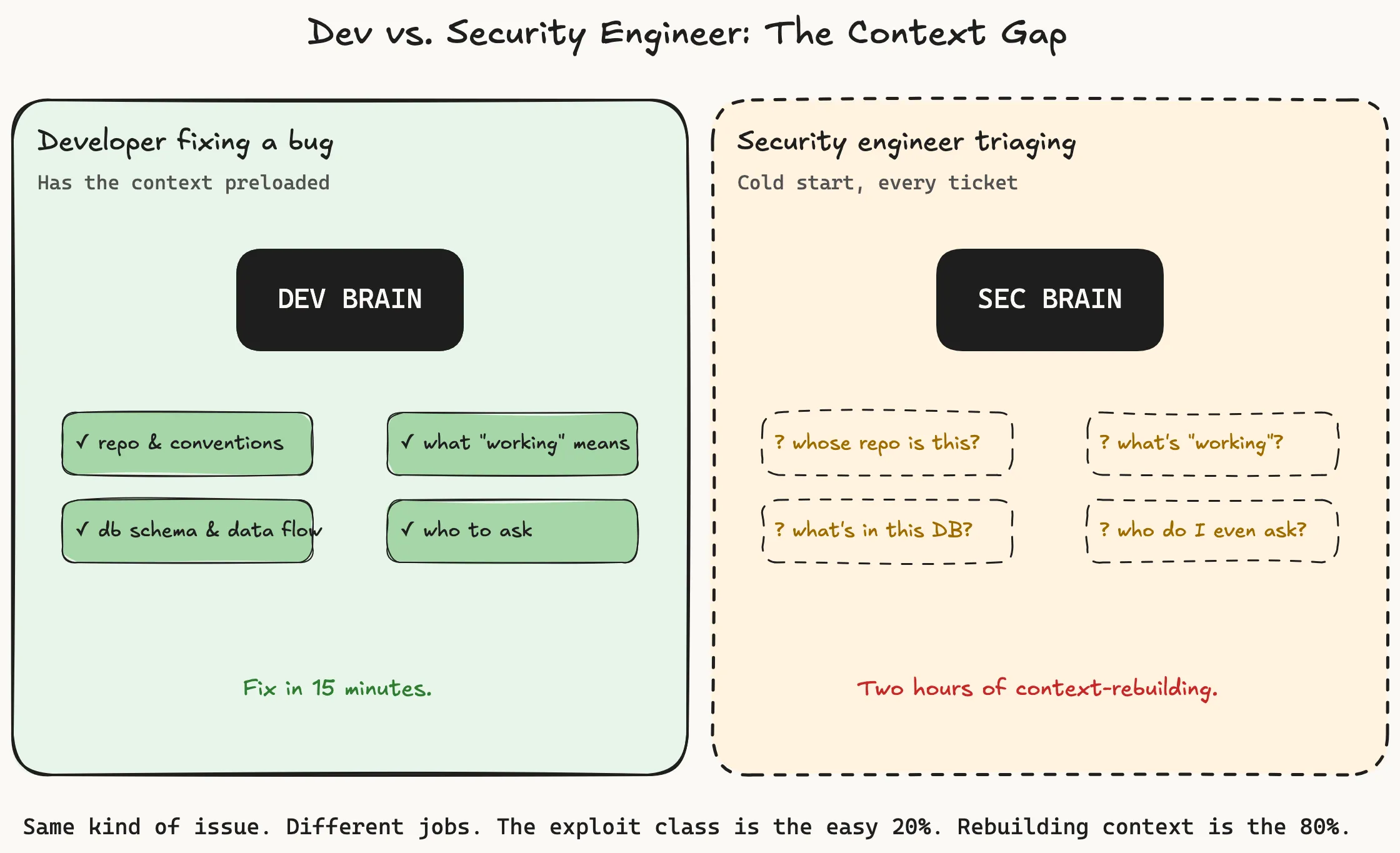

When a developer sits down to fix a bug in their own service, context is preloaded. They know:

- The repo and where things live

- Their team’s conventions

- What “working” means

- What’s in the database and what flows through this code path

- Who to ask when they don’t know

A security engineer triaging the same kind of issue starts from a cold version of all of that. The context exists — somewhere. In the owning team’s head. In the deploy log. In the runtime. In the original architecture doc that nobody updated. But it’s not loaded the way the dev’s is. Different service every ticket. Different team. Different threat model. Different data sensitivity. Different owners. Different definition of “working.” The job is reassembling that, fast, from outside.

This is why a developer can fix a bug in fifteen minutes and a security engineer can spend two hours triaging “the same” issue and still get it wrong. They’re doing different jobs. The dev is fixing a bug they already understand. The security engineer is re-acquiring the context the dev already had, cold, from outside.

Most of a security engineer’s day is not the exciting “hack things” version of the job. It’s: is this real, does it matter, who owns it, what’s the blast radius, can we fix it without breaking something.

All of it is context work.

Why scanners make this worse, not better

Here’s the trap the industry walked into.

Scanners are amazing at finding things. They are structurally weak at context, and that’s a design choice, not an indictment. The thing that makes a scanner cheap and fast is exactly the thing that strips context from its output. SAST sees user.go:142 doing string concatenation into a SQL query. It doesn’t natively see whether that path is reachable from an internet-facing route, whether the input is attacker-controlled, what’s in the database, or whether anyone uses this code anymore.
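At its core, reachability is a graph question. This is a minimal sketch that assumes you already have a static call graph, as a dict from each route or function to its callees, plus a list of internet-facing entry points. Building an accurate graph is the hard part. This sketch ignores dynamic dispatch, reflection, and callbacks, and all function names are hypothetical.

```python
from collections import deque


def is_reachable(call_graph, entry_points, target):
    """Breadth-first search from entry points; True if `target` can be called."""
    seen = set(entry_points)
    queue = deque(entry_points)
    while queue:
        fn = queue.popleft()
        if fn == target:
            return True
        for callee in call_graph.get(fn, ()):
            if callee not in seen:  # `seen` also guards against recursion cycles
                seen.add(callee)
                queue.append(callee)
    return False


# Hypothetical call graph: route handlers and functions mapped to their callees.
call_graph = {
    "GET /users/{id}": ["handlers.GetUser"],
    "handlers.GetUser": ["store.FindUser"],
    "cron.Cleanup": ["store.PurgeOldUsers"],
}
public_routes = ["GET /users/{id}"]
print(is_reachable(call_graph, public_routes, "store.FindUser"))       # True
print(is_reachable(call_graph, public_routes, "store.PurgeOldUsers"))  # False
```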

Some tools have started closing this gap — govulncheck does reachability for Go, KEV-aware scanners prioritize CVEs that are actually being exploited, and runtime tools tie findings back to whether the code path ever fires in prod. These are real and they’re getting better. But the default output of the scanning ecosystem is still context-stripped, and most teams’ pipelines are wired against that default.
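KEV-aware ordering can be as simple as a set lookup. This is a minimal sketch that assumes the CISA Known Exploited Vulnerabilities catalog has been downloaded as JSON, with a top-level `vulnerabilities` array whose entries carry a `cveID` field. Verify that against the current schema before relying on it. The CVE IDs below are placeholders, not real advisories.

```python
import json


def load_kev_ids(catalog_json):
    """Return the set of CVE IDs in a KEV catalog JSON document (assumed layout)."""
    catalog = json.loads(catalog_json)
    return {entry["cveID"] for entry in catalog.get("vulnerabilities", [])}


def kev_first(cve_ids, kev_ids):
    """Put known-exploited CVEs first; sorted() is stable, so order is kept within each group."""
    return sorted(cve_ids, key=lambda cve: cve not in kev_ids)


# Toy catalog with placeholder IDs, standing in for the downloaded feed.
sample = '{"vulnerabilities": [{"cveID": "CVE-2099-0002"}]}'
kev = load_kev_ids(sample)
print(kev_first(["CVE-2099-0001", "CVE-2099-0002", "CVE-2099-0003"], kev))
# ['CVE-2099-0002', 'CVE-2099-0001', 'CVE-2099-0003']
```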

So you get a finding. The finding is context-stripped on purpose — that’s what made it cheap to generate.

Now you have 4,200 of them. Generating context-stripped findings is roughly free. Re-attaching context to each one is expensive and human-bound.

The math is brutal: tools generate findings faster than humans can rebuild context. So backlogs grow. The bigger they grow, the harder triage becomes, because now you’re not just rebuilding context per finding — you’re also fighting noise, duplicates, and the ambient dread of an unknowably large queue.
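The arithmetic behind that claim fits in a few lines. If tooling adds findings faster than the team can rebuild context for them, the backlog grows by the difference every week, no matter how big it already is. The weekly rates below are hypothetical. Only the 4,200 starting point comes from the screenshot.

```python
def backlog_over_time(start, arrivals_per_week, triaged_per_week, weeks):
    """Backlog at the end of each week under constant arrival and triage rates."""
    backlog = start
    history = []
    for _ in range(weeks):
        # New findings land, triaged ones leave; the queue can't go negative.
        backlog = max(0, backlog + arrivals_per_week - triaged_per_week)
        history.append(backlog)
    return history


# Hypothetical rates: scanners add 300 findings a week, the team clears 200.
print(backlog_over_time(4200, 300, 200, 4))  # [4300, 4400, 4500, 4600]
```

There are only two ways out: fewer arrivals (deduplication, better filtering) or a higher triage rate (cheaper context per finding).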

Detection has had two decades of investment. Context-building has had a fraction of that — even though that’s where the bottleneck has migrated. Then we wonder why every CISO feels underwater.

Where the context gap actually lives

Three places I keep seeing this play out — these are also the use cases I’ll dig into in the rest of this series.

🚨 Security operations / alert triage

An alert fires. Login from a new country. Suspicious process on a host. Outbound connection to an unfamiliar domain.

To know if it’s real, you need:

- What does normal look like for this user / host / service?

- What’s the asset’s criticality?

- Are there correlated signals on adjacent systems?

- Was there a recent deploy or config change?

- Has this user travelled before?

Without that, every alert is a 30-minute investigation. With it, most resolve in seconds. Same alert, same analyst, two completely different jobs — and the difference is entirely context.
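One way to picture the difference is to take the same “login from a new country” alert and run it through a triage function that can see the context from the list above. This is a minimal sketch. The fields are hypothetical stand-ins for authentication history, an asset inventory, correlated alerts, and travel records, and the rules are illustrative rather than a recommended policy.

```python
from dataclasses import dataclass


@dataclass
class LoginContext:
    # Hypothetical fields; in practice each comes from a different system.
    country: str
    past_countries: set      # the user's "normal", from authentication history
    asset_criticality: str   # "low" or "high", from an asset inventory
    correlated_signals: int  # related alerts on adjacent systems in the same window
    travel_on_file: bool     # e.g. from a travel or HR system


def triage_new_country_login(ctx):
    """Illustrative rules only: escalate, auto-close, or send to a human."""
    if ctx.correlated_signals > 0:
        return "escalate"
    if ctx.country in ctx.past_countries or ctx.travel_on_file:
        return "auto-close"
    if ctx.asset_criticality == "high":
        return "escalate"
    return "review"


print(triage_new_country_login(LoginContext("PT", {"PT", "US"}, "high", 0, False)))  # auto-close
```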

This is why SOC analysts burn out. They’re being asked to do a context-heavy job in an environment that gives them no context.

☁️ Cloud security findings

“S3 bucket is public.” Cool. To know if it actually matters:

- Is it intentional? (Some are — that’s literally how you serve a static site.)

- What’s actually in it?

- Is this account dev, staging, or prod?

- Is the bucket actively used, or did someone create it in 2019 and forget?

- Who owns it now that the original team got reorged?
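The bucket questions can be sketched the same way. This sketch assumes the answers have already been gathered (an intent tag, a data classification, the account’s environment, a last-access date), and gathering them is exactly the expensive part. The labels and the one-year staleness threshold are hypothetical.

```python
from datetime import date


def public_bucket_priority(intended_public, data_class, environment, last_access, today):
    """Illustrative priority for a "bucket is public" finding, given gathered context."""
    if intended_public and data_class == "public":
        return "accept"   # e.g. a static-site bucket doing its job
    if data_class in {"confidential", "restricted"} and environment == "prod":
        return "urgent"
    if (today - last_access).days > 365:
        return "cleanup"  # likely forgotten; confirm an owner before deleting
    return "normal"


today = date(2025, 1, 1)
print(public_bucket_priority(False, "internal", "dev", date(2019, 6, 1), today))  # cleanup
```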

“This IAM role is over-permissioned.” Sure. To know if it actually matters:

- Is the permission actually used?

- What’s the principal?

- What’s the blast radius if compromised?

- Is the role attached to a Lambda that runs once a quarter, or to every pod in a 200-node cluster?
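Once the data exists, two of those IAM questions reduce to set arithmetic. This is a minimal sketch that assumes `used` comes from access logs over some lookback window. The blast-radius score is a deliberately crude, hypothetical proxy that weights every permission equally.

```python
def unused_permissions(granted, used):
    """Actions the role is allowed but was not observed using in the lookback window."""
    return sorted(set(granted) - set(used))


def blast_radius(granted, attached_workloads):
    """Crude proxy: permissions an attacker inherits, times the places that expose them."""
    return len(set(granted)) * attached_workloads


granted = ["s3:GetObject", "s3:PutObject", "iam:PassRole"]
used = ["s3:GetObject"]  # hypothetical, e.g. derived from access logs
print(unused_permissions(granted, used))  # ['iam:PassRole', 's3:PutObject']
print(blast_radius(granted, 1), blast_radius(granted, 200))  # 3 600
```

The lookback window matters. If you only check the last 30 days, a Lambda that runs once a quarter will look like it uses none of its permissions. That is exactly the kind of context the raw finding never carries.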

The finding itself is the easy part. Figuring out whether it matters in this cloud, this account, this organization, with this data is the entire job, and it’s nearly all context work.

This is why so many “cloud security” tickets sit untouched. Not because nobody cares, but because the context to act on them is scattered across the person who set up the resource, the team that inherited it, the runtime, and three Slack threads from 2022. It exists; it’s just not anywhere the person triaging the ticket can reach.

📦 AppSec / vulnerability management

CVE in a transitive dependency. Critical CVSS. Your scanner files a ticket.

To act on it, you need:

- Is the vulnerable function actually called?

- Is the affected code path reachable from a public endpoint?

- Is this service tier-1 (customer data) or tier-3 (internal tooling)?

- Is this dependency pinned because of a known incompatibility, or because nobody got around to bumping it?

A “critical” CVE in unreachable code on an internal admin tool is a Friday afternoon problem. The same CVE in your auth service is a “page someone now” problem. The CVSS score doesn’t tell you which. Context does.
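That decision can be written down explicitly. This is a minimal sketch with hypothetical thresholds and tier numbers. Here `reachable` stands in for a reachability result and `known_exploited` for a KEV lookup; the CVSS score provides neither.

```python
def cve_response(cvss, reachable, service_tier, known_exploited=False):
    """Map a CVE finding plus context to a response (hypothetical thresholds)."""
    if not reachable:
        return "backlog"      # the Friday-afternoon case
    if service_tier == 1 and (cvss >= 9.0 or known_exploited):
        return "page now"
    if service_tier == 1 or cvss >= 7.0:
        return "this sprint"
    return "backlog"


# Same CVSS score, different context.
print(cve_response(9.8, reachable=False, service_tier=3))  # backlog
print(cve_response(9.8, reachable=True, service_tier=1))   # page now
```

The same 9.8 lands in “backlog” or “page now” depending only on context. One caveat: reachability tools can miss paths, so an “unreachable” verdict deserves a periodic recheck rather than permanent dismissal.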

The reason vuln management is broken at most companies is not that they don’t have a scanner. It’s that the scanner emits CVE-shaped findings and the world demands risk-shaped decisions, and the gap between those two shapes is filled with — exactly — context.

Why this framing matters

If you accept that context is the bottleneck, a bunch of things follow.

The leverage isn’t more scanners. It’s anything that closes the context gap. Asset inventories that are actually accurate. Code ownership maps that aren’t six months stale. Reachability analysis. Data classification you can query. Runtime telemetry tied back to the code that produced it. Business-context tagging. Owner discovery.
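Owner discovery is one of the cheaper wins. This is a minimal sketch that assumes a GitHub-style CODEOWNERS file, where the last matching pattern takes precedence. The matching is simplified to directory prefixes anchored at the repo root plus `fnmatch` globs, so it does not implement full gitignore semantics. The team handles are hypothetical.

```python
import fnmatch


def parse_codeowners(text):
    """Parse CODEOWNERS lines into (pattern, owners) pairs, skipping comments."""
    rules = []
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        pattern, *owners = line.split()
        rules.append((pattern, owners))
    return rules


def _matches(pattern, path):
    # Simplified: "dir/" patterns match by prefix from the repo root;
    # everything else is an fnmatch glob, where "*" also crosses "/".
    pattern = pattern.lstrip("/")
    if pattern.endswith("/"):
        return path.startswith(pattern)
    return fnmatch.fnmatchcase(path, pattern)


def owners_for(rules, path):
    """Owners from the last matching rule, mirroring CODEOWNERS precedence."""
    owners = []
    for pattern, rule_owners in rules:
        if _matches(pattern, path):
            owners = rule_owners
    return owners


rules = parse_codeowners("""
# Hypothetical teams
*                    @example-org/platform
*.go                 @example-org/backend
/services/payments/  @example-org/payments
""")
print(owners_for(rules, "services/payments/charge.go"))  # ['@example-org/payments']
```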

Most security teams are still buying detection. They should be buying context.

Attach context at detection time, not at triage time. This is the move I see most teams miss. When you onboard a scanner or write a detection rule, the work isn’t done when it fires — it’s done when whoever picks up the resulting ticket can act on it in under a minute. That means defining, upfront, what context an analyst will need: which owner, which environment, which recent deploys, which adjacent signals. Bake that into the alert at the moment of detection. The findings that come out the other end are then risk-shaped, not CVE-shaped, and triage stops being archaeology.
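Here is a minimal sketch of that idea. The field names and lookup functions are hypothetical stand-ins for an owner map, environment tags, a deploy log, and alert correlation. The key design choice is recording which context could not be found, so the gap shows up in the ticket at detection time instead of being discovered at triage time.

```python
REQUIRED_CONTEXT = ("owner", "environment", "recent_deploys", "adjacent_signals")


def enrich(finding, lookups):
    """Attach context to a finding when it is created.

    `lookups` maps each context field to a callable taking the finding's asset id;
    a lookup returning None (or a missing lookup) marks that field as missing.
    """
    enriched = dict(finding)
    missing = []
    for name in REQUIRED_CONTEXT:
        lookup = lookups.get(name)
        value = lookup(finding["asset"]) if lookup else None
        if value is None:
            missing.append(name)
        enriched[name] = value
    enriched["missing_context"] = missing
    return enriched


# Hypothetical lookups; note there is no correlation source wired up yet.
lookups = {
    "owner": lambda asset: {"svc-billing": "@example-org/payments"}.get(asset),
    "environment": lambda asset: "prod",
    "recent_deploys": lambda asset: [],  # an empty list is an answer, not a gap
}
print(enrich({"id": "F-1", "asset": "svc-billing"}, lookups)["missing_context"])
# ['adjacent_signals']
```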

Hiring shifts. The senior security engineer’s most valuable skill stops being “knows lots of vulnerability classes” and becomes “can ramp on an unfamiliar codebase fast.” The first is increasingly commoditized. The second compounds.

AI fits naturally here. This is the part I’ll keep coming back to in this series. The dirty secret of LLMs is that they are very good at the boring half of the work — reading code, summarizing infra, walking call graphs, correlating tickets, asking dumb-but-necessary questions across a codebase. The half humans hate. The half that is the bottleneck.

Whether or not you trust an LLM to make a final call (you probably shouldn’t, yet), you should trust it to do the context-gathering legwork that takes a senior engineer two hours and a junior engineer six. Treating security as a context problem is what lets AI be genuinely useful here, instead of just generating more noise.

The honest counterpoint

Not all of security is reactive. Threat modeling, secure-by-default platforms, paved roads, identity infra — these are real, proactive, “we built something so the bad thing can’t happen” work. That kind of work is some of the highest-leverage stuff a security team can do.

But — and this is the part I’d push on — even that work fails without context. You can’t threat-model a system you don’t understand. You can’t build a paved road if you don’t know what roads people are currently driving on. The whole “secure-by-default” pitch assumes you know what defaults developers are reaching for, what the data flows look like, what the failure modes are. Context, again.

So even when security teams genuinely build, the leverage on what they build is gated by how well they understand the org they’re protecting. Same problem, one layer up.

Where this series is going

This was Part 1: the framing.

In the next posts I’ll get concrete. Each one takes a use case and walks through how I’m actually trying to solve the context problem for it — what I’m building, what works, what didn’t. Starting with security operations triage and AWS / cloud findings, since those are the ones I keep getting pulled into.

If you’ve ever stared at a 4,000-finding backlog and felt the specific exhaustion of “I don’t even know which of these to look at first” — that’s the feeling I’m trying to design against.

The thesis isn’t that security is hopeless. It’s that we’ve been trying to solve the wrong half of the problem.

The detection half is solved. The context half is wide open.

That’s where the next decade of security engineering gets built.

Part 1 of a series on rethinking security engineering as a context problem. Next up: how I’m building for security operations triage. If you have war stories — backlogs, alerts, AWS findings, the whole genre — I’d love to hear them.

Find me on Twitter.

Idea, framing, and edits: Aseem. Drafting assistance: Claude.

Big thanks to Nemo for the sharp review and the suggestions that made this post meaningfully better.
